Building a Time-Aware Retrieval Architecture

A Quantrium Case Study

Context

Traditional Retrieval-Augmented Generation (RAG) Systems powered enterprise queries with data from distributed sources. However, they often failed to deliver the exact version required at the right moment. This gap led to significant operational disruptions and compliance risks, that became impossible to ignore as enterprises transitioned RAG from experimental chatbots to mission-critical workflows.

Clients(s)

A US-based Registered Investment Advisory (RIA) Firm deploying an internal AI agent for compliance reviews, audits, and investment operations.

A US-based Technology Company building platforms and infrastructure for RIAs, needing accurate, permission-aware intelligence across clients and regulatory data.

A US-based EdTech Company focused on HQIM (High-Quality Instructional Materials), integrating AI into district-level systems to deliver insights on curriculum adoption, teacher performance, professional development, and root-cause analysis for student outcomes.

All three Clients partnered with Quantrium for a pilot deployment across their highly regulated and data-rich environments.

Business Challenge

The Latest Version Only Problem

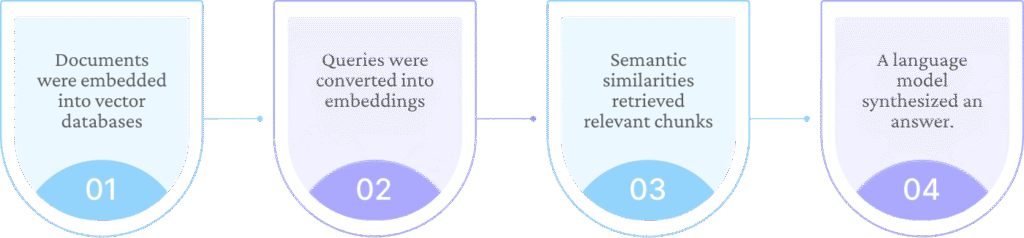

The RAG pipelines in all these enterprises followed a familiar flow that worked well for their present-tense questions.

Example

What did our data retention policy require in Q2 2024?

The Flow

The Response

The standard RAG system retrieved the most semantically similar document – typically the policy in force -and generated an answer that was consistent with the guidelines at that given point of time.

The Issue

The problem started when the questions about past requirements were answered using present-day documents. When Quantrium implemented this flow across all three projects, they realized that the responses generated had the same issue- they were accurate, but factually incorrect for the question asked.

- In the RIA pilots, this surfaced during mock audits.

- In platform teams, it emerged when debugging customer-reported issues tied to older configurations.

- In the EdTech environment, it became apparent when districts inquired about the evolution of instructional guidance across academic terms.

The root cause in all these cases was consistent:

- The AI systems were fast, but not always right.

- Time was not a first-class retrieval dimension.

Quantrium Tech

Curated Articles from Quantrium’s Tech Blogs.

Enterprises Don’t Have a Data Problem-They Have an Access Problem

The Quantrium Way Forward

Quantrium identified that the issue faced by all the implementations went beyond retrieval speed. The real gaps were caused by temporal correctness, context awareness, and trust.

To address this problem, Quantrium designed and implemented an Enterprise Agentic System that combined:

- Agentic AI for autonomous decision-making.

- Time-aware RAG for historical accuracy.

- MCP (Model Context Protocol) to connect securely with enterprise tools and data sources.

- Context-aware memory to optimize prompts, decisions, and LLM cost efficiency.

A Time-Aware Retrieval Architecture

Key features of this model included:

Version Index

Treating documents and datasets as temporal objects, it mapped document states to time ranges. And when a document changed, the system created a new version rather than overwriting the old one. This way, each version got time-stamped, indexed, and linked to its validity window and enabled natural-language queries like:

- “What did this policy say in December 2024?”

- “What guidance was active during the last academic year?”

- “Which configuration applied before the March update?”

The Enterprise Agent interpreted the temporal context, queried the version index, retrieved the correct historical version, and used that version, rather than the latest one, during response generation.

Delta Versioning Technique

This technique helped in managing the scalability and storage efficiencies by retaining the base version +structured change sets, as opposed to complete copies of every version.

This effectively enabled the reconstruction of any historical data on demand.

Each version also carried metadata – who changed it, when, why, and under which approval workflow. It also became a part of the retrieval context, enabling deeper questions such as: “Show policy updates approved by the compliance committee in 2024.”

Enterprise Agent + MCP: Connecting to the Real World

Time-aware RAG alone wasn’t enough because there was more to enterprises than documents. Quantrium built an Enterprise Agent using MCP (Model Context Protocol) to connect securely to a wide range of systems:

- Structured databases (SQL / NoSQL)

- Document repositories and policy systems

- Internal APIs and operational tools

- Analytics and reporting platforms

- Domain-specific systems (financial, educational, operational)

MCP provided a standardized, auditable way for the Agent to:

- Discover available tools and data sources.

- Understand schemas and access boundaries.

- Retrieve relevant information required for a given task.

- Maintain clear separation between models and enterprise systems.

This allowed the Agent to operate as a controlled, enterprise-grade orchestrator, and not as a free-floating LLM.

Quantrium Flux

Your front-row seat to the ever-evolving world of technology.

In all its depth and diversity.

Can Gen AI make movies faster and cheaper?

Permission-Aware, Multi-Source Retrieval

When a user submitted a query, the Enterprise Agent was designed to:

- Authenticate the user.

- Map their role, department, and entitlements.

- Execute retrieval only within those boundaries.

This prevented the users from accessing information through the AI layer when they couldn’t access it directly.

The connector layer integrated this with:

- Structured databases via permission-aware query generation.

- Document stores through authenticated APIs.

- Internal services using scoped service accounts.

- Vector stores with metadata-based access filtering.

- Real-time systems for operational data.

A Compliance Officer, an Operations Analyst, and a Teacher asking similar questions could now receive different answers, drawn from different systems and framed at different levels of detail.

Collectively, this improved the relevance of the information retrieved and ensured that every interaction was logged for auditability.

Agentic Tool Orchestration

Rather than following fixed retrieval paths, the Enterprise Agent was enabled to take autonomous decisions – like identifying the right tools based on the query to:

- Select tools based on reasoning, temporal relevance, data freshness, source authority, and precision required.

- Cross-validate results across sources.

- Flag discrepancies rather than generating a confident but incorrect answer.

Context-Aware Memory and LLM Cost Optimization

A context-aware memory was used as a critical layer across all pilots.

The Enterprise Agent maintained short- and medium-term memory about:

- User roles and recurring tasks

- Frequently accessed systems

- Ongoing investigations or workflows

- Prior clarifications and constraints

- Recognition and storage of key points and decisions

- Domain-specific vocabulary

- Learning from past feedbacks

- Cache FAQs

This memory was used to:

- Optimize prompt construction.

- Reduce redundant retrieval calls.

- Minimize unnecessary context sent to LLMs.

- Improve response consistency over time.

This, in turn, led to faster decisions, lower latency, and optimized LLM costs, especially in high-volume enterprise environments like the EdTech implementation. It also complied with various regulatory guidelines and requirements because the context-aware memory was scoped, auditable, and aligned with enterprise governance requirements.

Security in Zero-Trust Environments

Across all pilots, the Enterprise Agent operated within existing security frameworks, acting as an extension of the security policy with:

- Role-based access control aligned with Enterprise Identity Access Management (IAM).

- Encrypted communication using mutual Transport Layer Security (mTLS).

- Comprehensive audit logs covering queries, tools, sources, and versions.

- Data residency controls to safeguard information in approved regions.

Deep Dive

Retrieval-Augmented Generation (RAG) - Redefining how we interact with information

Impact

Quantrium’s time –aware Enterprise Agents effectively worked across the projects, going beyond faster answers to help:

- Compliance and audit teams reconstruct the exact information active at specific points in time.

- Legal and operations teams gain clarity while investigating historical issues.

- Educators and administrators track how instructional guidance and outcomes evolved across academic cycles.

- Knowledge workers interact with enterprise systems through a single, conversational interface, without losing precision or trust.

The system also scaled with independent and modular components.

The MCP Connector enabled the continuous incremental data ingestion from existing data sources and document stores, and the addition of new data sources and tools, eliminating the need for architectural redesign.

The pilot deployments turned AI from a helpful assistant into a trusted enterprise system, one that preserved truth across time and supported confident decision-making in complex, regulated environments.